Your Python script ran perfectly on localhost. You pushed it to production, pointed it at Amazon product pages, and within 200 requests, everything returned 403 errors and Cloudflare challenge pages. Sound familiar?

Cloudflare alone blocked over 416 billion scraping requests in just five months after enabling default anti-bot protection in 2025. Anti-scraping technology has become the default, not the exception. Without the right proxies for web scraping, your data pipeline is dead on arrival. Every major target now runs multi-layered detection that combines TLS fingerprinting, IP reputation scoring, JavaScript challenges, and behavioural analysis.

I have built and broken scraping setups against Google, Amazon, LinkedIn, Zillow, and dozens of Cloudflare-protected sites over the years. The proxy you choose is no longer a nice to have. It is the single biggest variable between a 95% success rate and a 40% one.

This guide covers the 7 best proxies for web scraping in 2026, evaluated by real benchmark data, anti-bot bypass capability, scraping tool integration, and cost per successful request.

👾 Why Proxies Matter More Than Your Scraping Code

The best parser in the world is useless if your request never reaches the page. Web scraping proxies sit between your scraper and the target site, rotating IP addresses so your automated traffic looks like thousands of different users browsing naturally.

Modern anti-bot systems evaluate five layers of every incoming request. TLS fingerprinting checks your connection signature. IP reputation scores flag known datacenter ranges and previously abused addresses.

JavaScript challenges test whether a real browser is executing code. Behavioural analysis watches request timing, mouse movement patterns, and scroll activity. Turnstile CAPTCHAs gate access behind human verification.

A good scraping proxy handles the first two layers (IP reputation and fingerprinting) by routing requests through residential IPs with clean histories. A great scraping proxy, or scraping API with proxy integration, handles all five layers automatically.

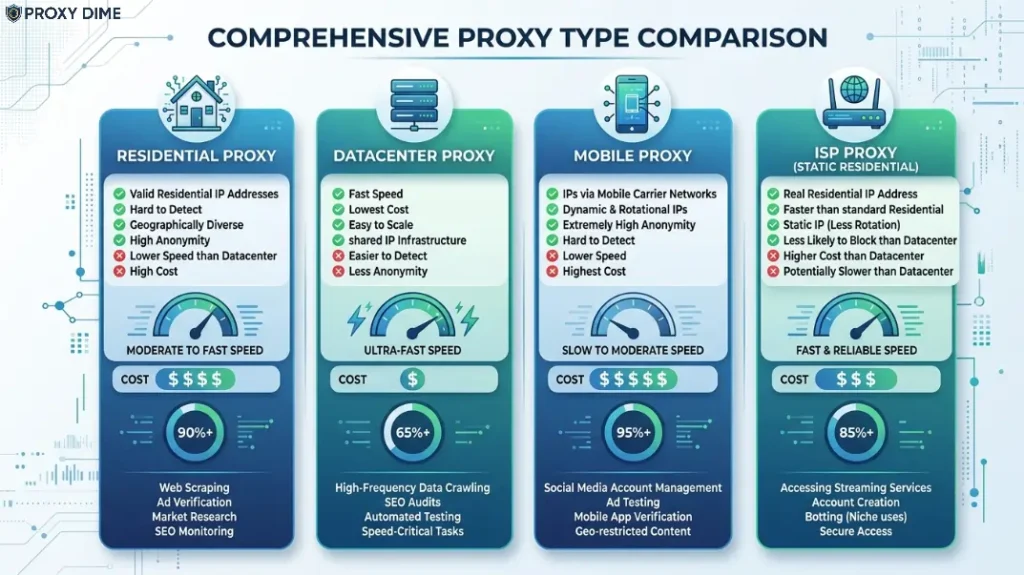

Rotating residential proxies win for most web scraping targets because each request gets a different real IP address. Datacenter proxies still work for lighter targets like basic e-commerce pages or news sites without aggressive protection. For hardened targets behind Cloudflare, DataDome, or PerimeterX, you need either ISP proxies or a scraping API that bundles proxy rotation with anti-bot bypass.

Quick Tip: For web scraping proxies, rotating residential IPs typically outperform datacenter IPs because target sites assign higher trust scores to genuine ISP addresses. Prioritise providers offering built-in retry logic and JavaScript rendering alongside proxy rotation.

High-Speed Proxies for Large-Scale Data Scraping

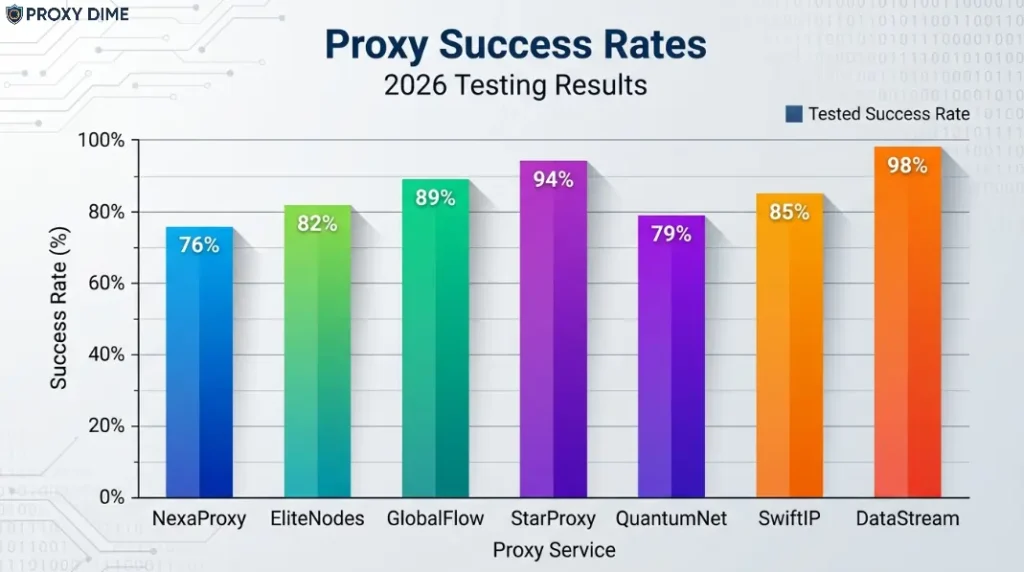

Before the full reviews, here is what independent testing actually shows. These numbers come from Proxyway's 2025 benchmark across 15 heavily protected sites and Scrape.do's 11-provider test.

| Provider | Avg. Success Rate | Avg. Response Time |

|---|---|---|

| Decodo | Second tier (Proxyway) | Sub-second standard |

| Webshare | ~86% real websites | Fast on unprotected |

| Oxylabs | 85.82% (Proxyway) | 16.76s protected sites |

| ZenRows | High on protected targets | Variable by protection level |

| Thordata | 99.7% uptime | Low latency optimised |

| Bright Data | 98.44% (Scrape.do) | Fast tier |

| Proxy-Seller | Varies by target | Location dependent |

These numbers tell a story. No single provider dominates every target. The best proxy for your scraping project depends on what you are scraping and how hard the target fights back.

1. Decodo

Decodo gives web scrapers something that raw proxy providers cannot. Their Web Scraping API bundles proxy rotation, CAPTCHA handling, and JavaScript rendering into a single endpoint, which means your Python or Node.js scraper sends one request and Decodo manages everything behind it.

The residential proxy pool covers 125 million+ IPs across 195+ countries with both rotating and sticky session support. What sets Decodo apart for scraping specifically is the 100+ prebuilt scraping templates for Amazon, Google, Instagram, eBay, and other common targets.

You feed in URLs. Decodo returns structured JSON, CSV, or Markdown. The API supports batch requests of up to 3,000 URLs in a single call, which is ideal for large product catalogue scraping or SERP collection jobs. Decodo fits scrapers who want to stop building middleware and start getting clean data.

Scraping Arsenal

What You Pay

Residential proxies from $3.5/GB pay-as-you-go. Web Scraping API priced separately per successful request. 3-day free trial available, plus a 14-day money-back guarantee.

Pros

Cons

Why Decodo for Web Scraping

The 100+ prebuilt scraping templates and 3,000-URL batch mode solve the two biggest scraping time sinks at once. You skip building custom parsers and skip sequential URL processing. For teams scraping product data across Amazon, Google Shopping, or social platforms, Decodo turns a multi-tool pipeline into a single API call.

2. Webshare

Webshare provides the most accessible starting point for web scraping proxies with a permanent free plan that includes 10 proxies and 1 GB of bandwidth per month. That sounds small, but it is enough to test your scraper against real targets before spending anything.

The paid residential pool reaches 80 million+ IPs across 195 countries with both HTTP and SOCKS5 support. In Proxyway's real-website testing, Webshare residential proxies achieved around 86% success rate with 250,000+ unique US IPs from 560,000 connection requests.

Response times are fast on unprotected targets. Webshare works best for developers running scrapers against lightly to moderately protected sites where raw proxy rotation does the job without needing a full anti-bot bypass layer.

Scraping Arsenal

What You Pay

Residential proxies from $3.5/month on the starter plan. Datacenter from $2.99/month for 100 proxies. Static residential from $6/month. Free plan always available.

Pros

Cons

Why Webshare for Web Scraping

The combination of a permanent free tier and $3.5/month entry pricing makes Webshare the lowest-risk way to add proxy rotation to an existing scraping setup. If you already handle anti-bot logic in your own code and just need clean rotating IPs, Webshare delivers the raw infrastructure at a price point that most providers reserve for datacenter proxies.

3. Oxylabs

Oxylabs brings the largest verified residential proxy pool to web scraping with 177 million+ IPs across 195 countries and a reported 99.95% overall success rate on standard targets. For scraping specifically, their Web Scraper API pre-processes responses with automatic retry logic, JavaScript rendering via headless browser, and AI-assisted data parsing through OxyCopilot.

In Proxyway's 2025 benchmark against 15 heavily protected sites, Oxylabs achieved an 85.82% success rate, which is solid but notably below Bright Data's performance on the hardest targets. Where Oxylabs shines is consistency at enterprise scale.

When you need 100,000+ daily requests across dozens of target sites without degradation over weeks, the 177 million IP pool provides rotation depth that smaller providers simply cannot match. Oxylabs fits enterprise data teams and agencies running production scraping pipelines.

Scraping Arsenal

What You Pay

Residential proxies from $8/GB pay-as-you-go. Datacenter from $12/month. ISP from $16/month. Web Scraper API priced per request. Free trial available through support.

Pros

Cons

Why Oxylabs for Web Scraping

At 177 million IPs, Oxylabs gives production scrapers the rotation depth to sustain months of daily high-volume collection without repeating IPs on the same target. The Web Scraper API and OxyCopilot AI parsing turn raw HTML into structured datasets without custom parser maintenance.

4. ZenRows

ZenRows was built from the ground up as a web scraping API, not a proxy provider that added scraping features later. The difference shows in how it handles protected targets. You pass a URL and parameters.

ZenRows routes your request through residential proxies, solves any CAPTCHAs, renders JavaScript, bypasses Cloudflare and DataDome challenges, and returns clean HTML or JSON. Their Scraping Browser runs full headless Chromium sessions through residential IPs for targets that require real browser fingerprints.

Concurrency scales from 20 to 400+ parallel requests depending on your plan. A 14-day free trial includes 1,000 basic and 40 protected results, enough to test against your actual targets. ZenRows fits developers and data engineers who want to stop fighting anti-bot systems and focus on what to do with the data.

Scraping Arsenal

What You Pay

Developer plan from $69/month (12.73 GB residential bandwidth). Business plan from $299/month (60 GB). Enterprise plans with custom volumes. 14-day free trial included.

Pros

Cons

Why ZenRows for Web Scraping

When your target sits behind Cloudflare's full detection stack (TLS fingerprinting, JS challenges, Turnstile CAPTCHAs), raw proxy rotation alone fails. ZenRows collapses what would be a five-tool scraping stack into a single API call that handles every detection layer automatically.

5. Thordata

Thordata addresses the biggest hidden cost in web scraping. Bandwidth overages. Their residential proxies and unlimited proxy plans remove per-GB pricing entirely, which fundamentally changes how you architect scraping jobs.

The network spans 100 million+ IPs across 190+ countries with city and ASN-level targeting. Beyond raw proxies, Thordata offers 120+ prebuilt scraper APIs for Google, Bing, and other common targets, plus a Web Scraper API with automated JS rendering and anti-bot handling.

Their infrastructure reports 99.9% uptime and a 99.7% success rate on supported targets. The free trial requires no credit card. Thordata fits scraping teams that run continuous, bandwidth-heavy collection jobs where unpredictable data volumes make per-GB models financially risky.

Scraping Arsenal

What You Pay

Residential proxies from $0.65/GB on metered plans. Unlimited bandwidth plans available per subscription tier. Free trial with no credit card.

Pros

Cons

Why Thordata for Web Scraping

When your scraper pulls product images, full-page HTML, and embedded media across thousands of pages daily, bandwidth consumption becomes your primary cost driver. Thordata's unlimited model turns that variable into a fixed monthly line item.

6. Bright Data

Bright Data scored the highest success rate in Scrape.do's independent benchmark at 98.44%, achieving 100% success on Indeed, Zillow, Capterra, and Google. The infrastructure behind that score is massive.

Over 150 million IPs across residential, datacenter, ISP, and mobile types with 195+ country coverage. For web scraping, Bright Data offers a dedicated Web Unlocker that handles anti-bot bypass automatically, plus a full SERP API, e-commerce scraper, and social media data collector as separate products.

The depth of the scraping toolkit is broader than any other provider on this list. But that breadth comes with complexity. The dashboard has a steep learning curve, and pricing structures vary across products. Bright Data fits agencies and enterprise teams scraping across dozens of different target types where a single provider needs to handle everything.

Scraping Arsenal

What You Pay

Residential proxies from $8/GB pay-as-you-go. Shared datacenter from $0.60/GB. Web Unlocker priced per successful request. Limited trial with $5 credit available.

Pros

Cons

7. Proxy-Seller

Proxy-Seller takes a simpler approach to web scraping proxies by selling individual IPs rather than bandwidth pools. You pick the country, choose datacenter or residential, select the duration, and get dedicated IPs with unlimited bandwidth.

That per-IP model works for scrapers who hit a specific set of targets repeatedly and need consistent IPs that do not rotate. For example, monitoring a competitor's pricing page every hour from the same IP avoids the rate-limit triggers that rapid IP changes sometimes cause on sites with aggressive fingerprinting.

Their network covers 220+ locations with IPv4 and IPv6 options, and both HTTP and SOCKS5 protocols. Proxy-Seller fits price monitoring teams and small scraping operations that need a handful of reliable IPs for consistent, repetitive data collection.

Scraping Arsenal

What You Pay

Datacenter IPv4 from approximately $1.39/IP per month. IPv6 from $0.16/IP. No minimum commitment. Duration ranges from 1 day to 12 months.

Pros

Cons

🎯Picking the Right Scraping Proxy for Your Target

1. How Protected Is Your Target?

This is the first question to answer and it determines everything else. Sites without serious anti-bot protection (news sites, basic e-commerce, government pages) work fine with raw rotating residential or even datacenter proxies.

Sites behind Cloudflare, DataDome, or PerimeterX need a scraping API with built-in bypass like ZenRows, Decodo's Site Unblocker, or Bright Data's Web Unlocker. Throwing raw residential proxies at a Cloudflare-protected target without anti-bot middleware wastes bandwidth and money. Match the tool to the protection level.

2. Rotation Speed and Concurrency Limits

Web scraping success depends on sending enough parallel requests without tripping rate limits. If your provider caps concurrency at 20 threads, scraping a 50,000-page catalogue will take 10x longer than it needs to.

ZenRows scales to 400+ concurrent requests on enterprise plans. Oxylabs and Decodo support unlimited concurrency on residential plans. Check the concurrency ceiling before committing. For high-volume scraping, unlimited concurrency is not a feature. It is a requirement.

3. Cost Per Successful Request vs Cost Per GB

Per-GB pricing hides a critical variable. If your success rate is 86%, you are paying for 14% wasted bandwidth. A provider charging $8/GB at 98% success rate actually costs less per successful request than one charging $3.5/GB at 80%.

Calculate your effective cost by dividing the per-GB price by the expected success rate on your specific target. Unlimited bandwidth plans from Thordata eliminate this calculation entirely. Run the real numbers before choosing by price tag alone.

4. JavaScript Rendering Capability

Over 70% of modern websites rely on JavaScript to load content. A proxy that delivers raw HTTP responses cannot extract data from React, Angular, or Vue applications. You need either a provider with a built-in headless browser (ZenRows Scraping Browser, Bright Data Browser API, Thordata Stealth Browser, Decodo Site Unblocker) or your own Puppeteer/Playwright setup running through the proxy.

If most of your targets are JavaScript-heavy single-page applications, a scraping API with managed rendering saves significant infrastructure costs.

5. Geographic Accuracy for Localised Data

Scraping localised search results, geo-restricted product pages, or region-specific pricing requires proxies in the exact target geography. A US residential IP will not return the same Google results as a UK or German one.

Providers offering city-level targeting (Oxylabs, Bright Data, Decodo, Thordata) let you specify the exact location for each request. Proxy-Seller's 220+ location spread covers niche geographies. Test geo-accuracy by comparing proxy-returned content against manual browser checks in the target location.

🚀 Frequently Asked Questions About Proxies for Web Scraping

What are web scraping proxies?

Web scraping proxies are intermediary servers that route your automated data collection requests through different IP addresses. They prevent target websites from identifying and blocking your scraper by making each request appear to come from a different user. Residential, datacenter, ISP, and mobile proxies all work for scraping, with residential rotating proxies being the most commonly used type for protected targets.

How do web scraping proxies bypass anti-bot detection?

Scraping proxies bypass detection by rotating IP addresses so no single IP sends an unnatural number of requests. Advanced scraping APIs go further by managing TLS fingerprints, solving CAPTCHAs, rendering JavaScript, and mimicking real browser behaviour. The combination of IP rotation and browser fingerprint management defeats the five-layer detection stack used by services like Cloudflare and DataDome.

Which proxy type is best for web scraping?

Rotating residential proxies perform best for most web scraping targets because they use real ISP-assigned IP addresses with organic browsing histories. Datacenter proxies work for unprotected targets where speed matters more than stealth. ISP (static residential) proxies work for session-dependent targets that require a consistent IP identity across multiple requests.

How much do web scraping proxies cost in 2026?

Costs range from free (Webshare's 10-proxy plan) to $8/GB for premium residential access from Oxylabs and Bright Data. Mid-range options like Decodo start at $3.5/GB. Scraping APIs like ZenRows cost $69/month for 12.73 GB. Unlimited bandwidth plans from Thordata remove per-GB pricing entirely. The effective cost depends heavily on success rate against your specific targets.

Is web scraping legal?

Web scraping of publicly available data is generally legal. The US Ninth Circuit's hiQ vs. LinkedIn ruling affirmed that scraping public data does not violate the Computer Fraud and Abuse Act. However, scraping behind login walls, violating terms of service, or collecting personal data without consent raises legal risk. Always check your jurisdiction and the target site's terms before scraping.

Do I need a scraping API or just proxies?

That depends on your target's protection level. Raw proxies are enough for sites without aggressive anti-bot systems. For Cloudflare, DataDome, or PerimeterX-protected sites, a scraping API that bundles anti-bot bypass, CAPTCHA solving, and JavaScript rendering will save significant engineering time. If more than 10% of your requests fail on raw proxies, a scraping API will likely improve both success rate and total cost.

Can free proxies work for web scraping?

Free proxy lists fail for any serious scraping project. The IPs are shared among thousands of users, carry high fraud scores, and get blocked immediately on most targets. Webshare offers a legitimate free tier with 10 proxies and 1 GB, suitable for small tests only. For production scraping, paid residential proxies with proper rotationare the minimum viable setup.

More Guides On Proxy Dime

📌 Final Thoughts

The right proxy for web scraping depends on what sits between your scraper and clean data. ZenRows handles the hardest anti-bot protected targets with the least engineering effort.

Oxylabs delivers the deepest IP pool for sustained enterprise-scale collection at 177 million+ addresses. Decodo's 100+ prebuilt templates and batch API turn common scraping jobs into configuration rather than code. Thordata makes sense when bandwidth unpredictability would blow up a per-GB budget.

Test with free trials from Webshare, Thordata, and ZenRows before locking into a plan. Run your actual URLs against real targets. Measure the success rate, not just the price per gigabyte.

The scraping proxy that costs $8/GB but returns clean data 98% of the time often costs less than the $3/GB option that fails on every fifth request. Let the numbers, not the marketing pages, make the decision.